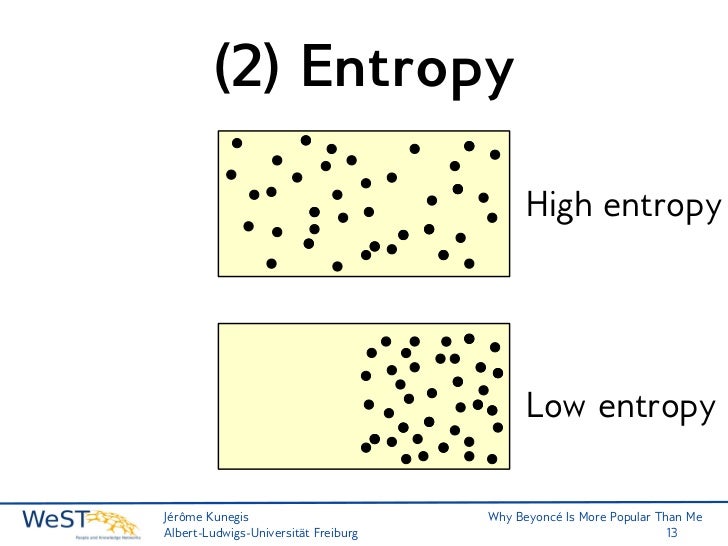

Moving from the high- to the low-entropy end of the spectrum, uncertainty decreases and predictability increases until we reach a transition zone that Carhart-Harris calls “criticality.” Just beyond the transition point lies a sweet spot of sorts, where uncertainty is low enough, and our perceptions of the world are predictable enough, to allow us to go about our normal waking business. The suppressed entropy of this state, in fact, is what makes a stable, ongoing sense of self possible, since it is responsible for “metacognitive functions including reality testing and self-awareness.” The sweet spot is limited, however, because too high a degree of predictability leads to rigid, inflexible thinking associated with repetitive and ruminative disorders such as depression, addictions, and OCD. And while at the other end of the spectrum too much entropy is associated with a psychotic detachment from reality, a certain degree of entropy provides the sort of cognitive flexibility associated with creativity and divergent thinking.

The high-entropy state of consciousness characteristic of infancy, REM dreaming, and psychosis is obviously not well suited to meeting the cognitive demands placed upon us by our normal waking lives. A higher degree of cognitive certainty, or predictability, is required of us as we go about our day-to-day routines. At Low Entropy, we believe changing the world starts with changing ourselves.

This is a technical guide to stealing Tesla Model S cars by exploiting 40/24-bit fixed keys with a time-memory trade-off (TMTO) attack using a kids' Raspberry Pi. A low-entropy car, then, and quite ironic given that the £30 Pi has a good TRNG inside it. Simply spending £Ks on kit without entropy is not always adequate either: the cheapest of these do not have any form of embedded entropy source, and that can lead to weak keys like "secret" simply being hard-coded into the firmware.

This implies near-optimal performance for inherently low-dimensional structures embedded in high-dimensional spaces, including the hidden-layer outputs of deep neural networks (DNNs), which can be used to estimate mutual information (MI) in DNNs.

Since entropy primarily deals with energy, it's intrinsically a thermodynamic property (there isn't a non-thermodynamic entropy). Earth radiates away as much energy as it absorbs, but at about 1/20th of the sun's temperature, so the entropy it sends back into space is about 20 times larger than the entropy it receives from the sun. This 'entropy sink' is what allows life on Earth.

The positive entropy change is due mainly to the greater mass of $\mathrm{CO_2}$ molecules compared to those of $\mathrm{O_2}$. For the Haber ammonia synthesis, by contrast:

$$
3\,\mathrm{H_2} + \mathrm{N_2} \longrightarrow 2\,\mathrm{NH_3(g)} \qquad \Delta H = -46.2\ \mathrm{kJ} \qquad \Delta S = -389\ \mathrm{J\,K^{-1}} \qquad \Delta G = -16.4\ \mathrm{kJ}\ \text{at}\ 298\ \mathrm{K}
$$

The decrease in moles of gas drives the entropy change negative, making the reaction spontaneous only at low temperatures.
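Why an exothermic reaction with a negative entropy change, such as the Haber synthesis, is spontaneous only below some temperature follows from $\Delta G = \Delta H - T\Delta S$: when $\Delta S < 0$ the $-T\Delta S$ term is positive and grows with $T$, until $\Delta G$ changes sign at $T^* = \Delta H / \Delta S$. The minimal Python sketch below illustrates this; the function names are hypothetical, and the input values are round numbers chosen for easy arithmetic, not measured data for ammonia.

```python
from typing import Optional


def gibbs_free_energy(delta_h_kj: float, delta_s_j_per_k: float, temp_k: float) -> float:
    """Return dG = dH - T*dS in kJ, with dH in kJ and dS in J/K."""
    return delta_h_kj - temp_k * delta_s_j_per_k / 1000.0


def crossover_temperature(delta_h_kj: float, delta_s_j_per_k: float) -> Optional[float]:
    """Temperature in K where dG = 0 (T* = dH/dS), or None if dG never changes sign."""
    if delta_s_j_per_k == 0:
        return None
    t_star = delta_h_kj * 1000.0 / delta_s_j_per_k
    return t_star if t_star > 0 else None


# Hypothetical exothermic reaction whose entropy decreases (illustrative numbers only).
dH, dS = -100.0, -200.0  # kJ, J/K
print(f"crossover at {crossover_temperature(dH, dS):.0f} K")  # crossover at 500 K
for T in (300.0, 700.0):
    dG = gibbs_free_energy(dH, dS, T)
    verdict = "spontaneous" if dG < 0 else "not spontaneous"
    print(f"T = {T:.0f} K: dG = {dG:+.1f} kJ ({verdict})")
# T = 300 K: dG = -40.0 kJ (spontaneous)
# T = 700 K: dG = +40.0 kJ (not spontaneous)
```

Below $T^*$ the negative $\Delta H$ dominates; above it, the positive $-T\Delta S$ term makes $\Delta G$ positive.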

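The "about 20 times" figure for Earth's entropy budget can be checked with a back-of-the-envelope estimate. This sketch assumes a steady state in which Earth radiates away the same power $\dot Q$ it absorbs from sunlight, treats both the incoming and the outgoing radiation as blackbody radiation at the emitter's temperature (a simplification, for which the entropy flux is $\tfrac{4}{3}\dot Q/T$), and uses rounded temperatures of about 5800 K for the sun's surface and about 290 K for Earth's surface:

$$
\dot S_{\text{in}} \approx \frac{4}{3}\,\frac{\dot Q}{T_{\odot}}, \qquad
\dot S_{\text{out}} \approx \frac{4}{3}\,\frac{\dot Q}{T_{\oplus}}, \qquad
\frac{\dot S_{\text{out}}}{\dot S_{\text{in}}} \approx \frac{T_{\odot}}{T_{\oplus}} \approx \frac{5800\ \text{K}}{290\ \text{K}} = 20
$$

Both $\dot Q$ and the $\tfrac{4}{3}$ factor cancel, so under these assumptions the ratio depends only on the two temperatures.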
The absolute entropy of a pure substance at a given temperature is the sum of all the entropy it would acquire on warming from absolute zero. By the second law of thermodynamics, the total entropy of an isolated system can only increase over time. Applied to our universe, this means that its entropy must necessarily increase over time (or, improbably, stay constant), resulting in the final heat death of our universe.

The low-entropy client hints are those that don't give away much information that might be used to create a fingerprint of a user. They may be sent by default on every client request, irrespective of the server's `Accept-CH` response header, depending on the Permissions Policy.

When the secondary pool has low entropy, some portion of entropy is extracted from the primary pool (again using cryptographic one-way functions); part of it is then remixed back into the primary pool, and part of it is mixed into the consuming pool.

Entropy is a measure of the number of possible choices from which our secret value could have been drawn; it's a way to measure hardness-to-guess and the strength of passwords. For example, consider two passwords/keys: a short, common word and a 1024-bit random key. Password entropy calculations are notoriously difficult, but you can see that the former is a bit short. It's also a common word and can be found in hackers' dictionaries. The latter is 1024 bits of entropy pulled from a true random number generator (TRNG). There are $2^{1024}$ possible values of this length. Clearly that's much harder to guess. But the latter was also much harder to generate: it took a device and an investment of £s, and it consumes space and electricity. These are often in short supply, with many Internet of Things devices built to a price point and running on batteries.
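The password/key comparison can be made concrete with the usual upper-bound formula $H = L \log_2 N$ for a secret of $L$ symbols drawn uniformly and independently from an alphabet of $N$ symbols. This is a minimal Python sketch; the function name `entropy_bits` and the 10,000-word dictionary size are hypothetical, and human-chosen passwords have far less entropy than this bound suggests.

```python
import math


def entropy_bits(alphabet_size: int, length: int) -> float:
    """Upper bound on the entropy (in bits) of a secret of `length` symbols,
    each drawn uniformly and independently from `alphabet_size` symbols."""
    if alphabet_size < 1 or length < 0:
        raise ValueError("alphabet_size must be >= 1 and length must be >= 0")
    return length * math.log2(alphabet_size)


# A 6-letter lowercase string, *if* every letter were chosen at random.
print(f"random 6 lowercase letters: {entropy_bits(26, 6):.1f} bits")  # 28.2 bits
# A common word picked from a hypothetical 10,000-word cracking dictionary.
print(f"dictionary word: {math.log2(10_000):.1f} bits")  # 13.3 bits
# A 1024-bit key from a TRNG: 1024 symbols from a 2-symbol alphabet.
print(f"1024-bit random key: {entropy_bits(2, 1024):.0f} bits")  # 1024 bits
# 2**1024 possible keys; Python ints are arbitrary precision, so this count is exact.
print(f"possible 1024-bit keys: {len(str(2 ** 1024))} decimal digits")  # 309 decimal digits
```

The gap between the "random letters" bound and the dictionary figure is why a short common word is weak: an attacker searches the dictionary, not the whole alphabet space.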

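The primary/secondary pool transfer described in the section can be pictured with a toy model. This is a hypothetical Python sketch, not the Linux kernel's actual random-number code (whose design has changed across versions): the `Pool` class, the `refill` function, SHA-256 as the one-way function, 32-byte pools, and the 64-bit low-water mark are all assumptions chosen for illustration.

```python
import hashlib


class Pool:
    """Toy entropy pool: a 32-byte state plus an estimate of the bits of entropy it holds."""

    SIZE = 32  # bytes, so the estimate is capped at 256 bits

    def __init__(self, name: str) -> None:
        self.name = name
        self.state = bytes(self.SIZE)
        self.entropy_bits = 0

    def mix(self, data: bytes, credited_bits: int) -> None:
        # Fold new input into the state with a one-way hash (toy stand-in for real mixing).
        self.state = hashlib.sha256(self.state + data).digest()
        self.entropy_bits = min(self.entropy_bits + credited_bits, 8 * self.SIZE)


def refill(primary: Pool, secondary: Pool, low_water_bits: int = 64) -> None:
    """If the secondary pool is low, move entropy over from the primary pool."""
    if secondary.entropy_bits >= low_water_bits:
        return
    # Extract through a one-way function so the primary's state is never exposed directly.
    digest = hashlib.sha256(b"extract" + primary.state).digest()
    back, forward = digest[:16], digest[16:]
    # Part of the extracted output is remixed into the primary pool (no new entropy credited)...
    primary.mix(back, 0)
    # ...and part is mixed into the consuming pool, transferring up to 128 bits of credit.
    moved = min(8 * len(forward), primary.entropy_bits)
    primary.entropy_bits -= moved
    secondary.mix(forward, moved)


primary, secondary = Pool("primary"), Pool("secondary")
primary.mix(b"hypothetical interrupt timings", 256)  # the credit here is illustrative only
refill(primary, secondary)
print(primary.entropy_bits, secondary.entropy_bits)  # 128 128
```

Hashing before output means that reading from the consuming pool never reveals the primary pool's raw state.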